We've all been there. You're sitting in the glow of your desktop, a vision for the perfect sequence playing out in your head. You can see it so clearly: that one specific shot where the light hit a subject's face just right, or that perfect, engaging reaction from your last street vlog that you just know will make the entire video click. But then, you open up your 4TB drive, and reality hits.

In this article

- The Problem: Storage is a Graveyard

- The Solution: A Large Visual Memory Model

- What is Semantic Video Search?

- How It Works: The Second Brain for Your Camera

- Use Case: Finding the Needle in the Haystack

- Natural Language Queries: Chat with Your Library

- Stop Scrubbing. Start Creating.

The Problem: Storage is a Graveyard

The screen stares back at you with an ocean of meaningless labels. Files named DCIM_Oct_22 or IMG_9482.mov tell you absolutely nothing about the possibility and potential hidden inside them. To find that one three-second golden clip, you're forced into what every creator dreads: the Timeline Slump.

Manually scrubbing and fast-forwarding through a digital haystack is exhausting, hard on your eyes, and a waste of your creative energy. You're scanning 500GB of raw footage, some of it from three months ago, praying that the frame you want flashes by before your patience wears thin. Usually, the slump wins. You either give up entirely or settle for a good-enough shot. It's a heartbreaking and unnecessary compromise, because you know the perfect shot is in there somewhere, buried under a mountain of data, just waiting to be found.

Your footage shouldn't be a graveyard. It should be a forever-available resource.

At Memories.ai, we believe you shouldn't have to watch your footage just to find it. You should be able to talk to it and ask for it with no effort.

Standard cloud storage is a passive archive. It holds your data, but it doesn't know your data.

The Solution: A Large Visual Memory Model

This is where Semantic Video Analysis changes the game. Unlike traditional tools that rely on text metadata, the Memories.ai Large Visual Memory Model actually watches your videos.

It understands context, objects, and emotions. It doesn't just see blue and orange pixels; it recognizes a cinematic sunset over the Pacific Ocean or fast-changing actions.

Imagine typing these queries into your personal video library:

- Find the clips where the dog is wearing a red harness.

- Get all the B-roll of neon signs in Tokyo with a cyberpunk vibe.

- Show me every time I mentioned the 'Q4 marketing budget' in a meeting.

What is Semantic Video Search?

Traditional search is lexical: it looks for exact matches in filenames or manual tags. If you didn't name a file sunset, the computer doesn't know there's a sunset in it.

Semantic video search is different. At Memories.ai, we don't just index filenames; we understand the pixels. Using our Large Visual Memory Model (LVMM), the AI watches your footage and encodes it into a web of interconnected memories. It understands context, action, and emotion.

Think of it like this: Traditional search is a librarian who only knows the titles of books. Memories.ai is the librarian who has read every page of every book in your library and remembers exactly what happened in chapter four.
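To make the librarian analogy concrete, here is a minimal sketch of the difference between lexical and semantic lookup. It is a toy illustration, not the Memories.ai implementation: the filenames, the three-number "concept vectors" (sunset, dog, city), and the function names are all hypothetical, and a real system would learn its vectors from the footage rather than have them typed in by hand.

```python
import math

# Hypothetical clip library: filename -> made-up concept vector (sunset, dog, city).
# These numbers are assumptions for illustration only.
CLIPS = {
    "IMG_9482.mov": [0.9, 0.0, 0.1],      # actually a sunset
    "DCIM_Oct_22.mov": [0.0, 0.8, 0.2],   # actually a dog
    "sunset_final.mov": [0.1, 0.1, 0.9],  # misleading name: city footage
}

# Hypothetical query vectors in the same concept space.
QUERY_VECTORS = {"sunset": [1.0, 0.0, 0.0], "dog": [0.0, 1.0, 0.0]}


def lexical_search(word):
    """Return filenames that literally contain the query word."""
    return [name for name in CLIPS if word.lower() in name.lower()]


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def semantic_search(word):
    """Return the filename whose concept vector is closest to the query."""
    query = QUERY_VECTORS[word]
    return max(CLIPS, key=lambda name: cosine(CLIPS[name], query))


print(lexical_search("sunset"))   # matches on the filename only
print(semantic_search("sunset"))  # matches on the content vector
print(lexical_search("dog"))      # no filename mentions a dog
print(semantic_search("dog"))
```

In this toy library, the filename search is fooled by a clip that was merely named sunset, while the vector comparison picks the clip whose content is closest to the query.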

How It Works: The Second Brain for Your Camera

When you upload footage to the Memories.ai platform, our world-model encoder builds a Visual Index.

- Temporal Understanding: It tracks how actions change and evolve over time (e.g., the difference between a person sitting down and a person standing up).

- Spatial Logic: It understands where objects are located within the frame and their relationship to one another.

- Natural Language Mapping: It bridges the gap between how humans speak and how cameras record.
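One way to picture a visual index like the one described above is as a list of time-stamped segments, each recording what is happening and where things are in the frame. The sketch below is only an assumption about how such a structure could look: the `Segment` fields, the sample data, and the helper functions are hypothetical and are not taken from the Memories.ai platform.

```python
from dataclasses import dataclass, field


@dataclass
class Segment:
    """One indexed stretch of video (hypothetical schema)."""
    video: str
    start_s: float
    end_s: float
    action: str
    # Object label -> (x, y) centre in the frame, each from 0.0 to 1.0.
    objects: dict = field(default_factory=dict)


# Made-up sample index for a single interview clip.
INDEX = [
    Segment("interview.mov", 0.0, 4.0, "standing",
            {"person": (0.5, 0.5), "chair": (0.7, 0.6)}),
    Segment("interview.mov", 4.0, 6.5, "sitting down",
            {"person": (0.6, 0.6), "chair": (0.7, 0.6)}),
    Segment("interview.mov", 6.5, 30.0, "sitting",
            {"person": (0.7, 0.6), "chair": (0.7, 0.6)}),
]


def find_transition(index, before, after):
    """Temporal query: where is action `before` directly followed by `after`?"""
    ordered = sorted(index, key=lambda s: (s.video, s.start_s))
    return [
        (nxt.video, nxt.start_s)
        for cur, nxt in zip(ordered, ordered[1:])
        if cur.video == nxt.video and cur.action == before and nxt.action == after
    ]


def left_of(segment, a, b):
    """Spatial query: is object `a` to the left of object `b` in this segment?"""
    if a not in segment.objects or b not in segment.objects:
        return False
    return segment.objects[a][0] < segment.objects[b][0]


print(find_transition(INDEX, "standing", "sitting down"))
print(left_of(INDEX[0], "person", "chair"))
```

Keeping time ranges and positions next to each label is what lets a question about order ("standing, then sitting down") or layout ("person left of the chair") be answered without rewatching the clip.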

Use Case: Finding the Needle in the Haystack

Imagine you've just returned from a month-long production trip. You have 50GB of raw 4K footage from a dozen different YouTube-style vlogs. You need one specific B-roll shot for your intro: That moment the sun hit the mountain peak just before the clouds moved in.

- The Old Way: Opening every folder, previewing clips, and dragging the playhead back and forth for more than 4 hours.

- The Memories.ai Way: You type that exact phrase into the search bar and ask the AI agent to pull the shot for you, or even to finish editing the entire vlog.

Because our AI understands visual concepts, it doesn't need a tag. It identifies the mountain peak, recognizes the sun hitting it and the change in lighting, and ignores the clips where it was already cloudy. Within seconds, it pulls the exact timestamp from a video buried three levels deep in your folders.

Whether it's finding a specific reaction in a 2-hour podcast or a specific car driving past a landmark in a 4-hour city vlog, the needle is now just a search query away.

Natural Language Queries: Chat with Your Library

The breakthrough isn't just in finding; it's also in interacting. With the Chat & Agents feature in the Memories.ai app, you're moving far beyond a clunky search bar. You're actually having a conversation with your archives.

You can ask:

- "Show me all the times the guest laughed during the interview."

- "Find the clips of the red sports car from my San Francisco vlog."

- "What were the three key points we discussed in the whiteboard session yesterday?"

The AI Agent scans your visual memory, retrieves the relevant sessions, and presents them to you as a curated list. It's like having an assistant editor sitting right next to you—someone who never sleeps, never forgets, and has a perfect, 24/7 recall of every single frame you've ever captured.
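As a rough illustration of that last step, the sketch below turns a handful of raw search hits into a curated list of timecodes, grouped by video and ordered best match first. Everything in it is hypothetical: the hit format `(video, start_seconds, score)`, the sample data, and the 0.5 score cutoff are assumptions made for the example, not the behavior of the Memories.ai agent.

```python
from collections import defaultdict


def timecode(seconds):
    """Format a number of seconds as HH:MM:SS."""
    hours, rest = divmod(int(seconds), 3600)
    minutes, secs = divmod(rest, 60)
    return f"{hours:02d}:{minutes:02d}:{secs:02d}"


def curate(hits, min_score=0.5):
    """Group hits by video, drop weak matches, and list the best match first."""
    grouped = defaultdict(list)
    for video, start_s, score in hits:
        if score >= min_score:
            grouped[video].append((score, start_s))
    return {
        video: [timecode(start_s) for _, start_s in sorted(moments, reverse=True)]
        for video, moments in grouped.items()
    }


# Made-up hits for "the guest laughed": (video, start in seconds, match score).
HITS = [
    ("podcast_ep12.mov", 4521.0, 0.91),
    ("podcast_ep12.mov", 310.5, 0.77),
    ("sf_vlog.mov", 95.0, 0.32),  # weak match, filtered out
]

for video, moments in curate(HITS).items():
    print(video, moments)
```

The cutoff is the design choice that matters here: a curated list is only useful if weak matches are left out rather than padded in.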

Stop Scrubbing. Start Creating.

The first cut is often the hardest part of the edit. By using a Video Analyzer to act as your Assistant Editor, you eliminate the search phase entirely. You can move straight from raw footage to storytelling, using our AI Chat & Agents to pull your highlights or even help you structure your entire vlog.

The future of video isn't about having more storage; it's about having better memory. Your footage is a goldmine of insights and content—you just need a way to query it. Turn your hard drive from a black hole into a searchable database today.

Ready to turn your video library into a searchable database? Explore the Memories.ai Video Analyzer

Read more

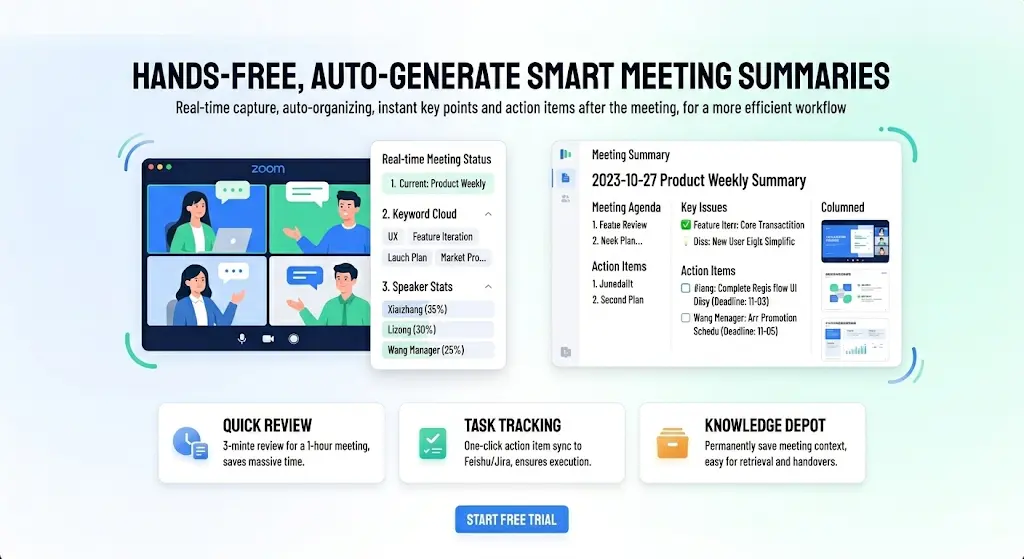

Best Meeting Transcription Software in 2026 (Tested & Compared)

We tested 8 meeting transcription tools head-to-head. Compare accuracy, AI summaries, pricing, and integrations to find the best fit for your team.

Best AI Note Taker for Zoom in 2026 — Auto Summary, Action Items & Smart Search

Compare the 6 best AI note takers for Zoom meetings. Get automatic transcription, summaries, and action items. See how they stack up on accuracy, features, and price.

How to Convert Video to Text with Visual Descriptions (2026 Guide)

In a world where 80% of internet traffic is video, text-only transcripts leave out half the story. This guide shows you how to use [Memories.ai](https://memories.ai/app) to convert video and audio into text that includes visual narratives, speaker recognition, and customized summaries.